Research overview:

Discovering Causal Knowledge:

Discovering causal relationships from observational data is a fundamental challenge across science, engineering, and ML/AI. Our work focuses on automated causal discovery (i.e., causal network learning) in complex environments, with an emphasis on providing theoretical guarantees. Specifically, we are tackling causal discovery in the presence of distribution shifts, hidden confounders, selection bias, nonlinear causal mechanisms, measurement errors, and missing values. Additionally, we are extending causal discovery algorithms to handle large-scale problems. Our goal is to develop a unified framework for causal discovery that is reliable, broadly applicable, and scalable in real-world scenarios.

Leveraging Causality to Empower ML/AI and Foundation Models:

We have also been striving to answer the question: how does causal understanding contribute to artificial general intelligence (AGI)? By integrating causal reasoning, we can significantly elevate the capabilities of both machine learning models and foundation models, making them more robust, interpretable, and effective in real-world scenarios. Causal understanding enables these models to move beyond surface-level correlations and grasp the underlying mechanisms that drive their outputs. This capability is particularly valuable for addressing fundamental challenges such as distribution shifts, hidden variables, and spurious associations, which are common obstacles in tasks like classification, clustering, forecasting in nonstationary environments, reinforcement learning, transfer learning, representation learning, and continual learning.

Moreover, with a deep understanding of causality, foundation models can explore and infer causal mechanisms that may not yet be fully understood or recognized by humans. This empowers them to generate new insights, uncover hidden relationships, and solve problems in ways that could surpass current human expertise. By embedding causal reasoning into foundation models, we aim to enable them to go beyond the limitations of the data they are trained on and make informed decisions that account for the true underlying causes of observed phenomena.

In the broader pursuit of AGI, causal understanding is not merely a tool for enhancing model performance—it is a gateway to breakthroughs that push the boundaries of what is possible. Foundation models equipped with causal understanding can evolve from powerful computational tools into systems capable of generating new knowledge and solving complex, real-world problems in ways that might even exceed human capabilities.

Research highlights:

Causal Discovery in the Presence of Distribution Shifts

It is commonplace to encounter heterogeneous or nonstationary data, in which the underlying data-generating process changes across domains or over time. Such distribution shifts present both challenges and opportunities for causal discovery, as well as for various ML tasks.

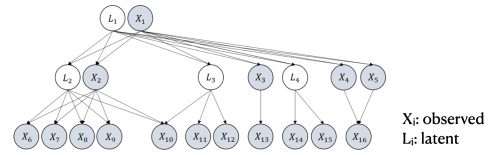
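
As a minimal illustration of the opportunity, the sketch below treats the domain index as an extra observed variable, the idea underlying constraint-based approaches such as CD-NOD. Everything in it is an assumption chosen for illustration: the three simulated domains, the linear-Gaussian mechanisms, and the use of linear partial correlation as a stand-in for a general (e.g., kernel-based) conditional independence test. Because the domain index C cannot be caused by the measured variables, the dependence between C and X orients that edge as C → X; since C and Y become independent given X, X is not a collider, so the edge between X and Y is oriented X → Y.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical setup: three domains indexed by C = 0, 1, 2. The mechanism
# generating the cause X shifts across domains, while P(Y | X) stays fixed.
n_per_domain = 2000
c = np.repeat([0, 1, 2], n_per_domain)
x = rng.normal(loc=2.0 * c, scale=1.0 + 0.5 * c)   # P(X) changes with C
y = 1.5 * x + rng.normal(scale=1.0, size=x.size)   # P(Y | X) is invariant


def partial_corr(a, b, given):
    """Correlation of a and b after linearly regressing `given` out of both.

    A linear stand-in for a conditional independence test (assumption)."""
    design = np.column_stack([np.ones_like(given), given])
    res_a = a - design @ np.linalg.lstsq(design, a, rcond=None)[0]
    res_b = b - design @ np.linalg.lstsq(design, b, rcond=None)[0]
    return np.corrcoef(res_a, res_b)[0, 1]


corr_cx = np.corrcoef(c, x)[0, 1]   # C is associated with X
corr_cy = np.corrcoef(c, y)[0, 1]   # and, through X, with Y
pcorr_cy_given_x = partial_corr(c.astype(float), y, x)  # near 0: C indep. Y given X

print(f"corr(C, X)      = {corr_cx:.2f}")
print(f"corr(C, Y)      = {corr_cy:.2f}")
print(f"pcorr(C, Y | X) = {pcorr_cy_given_x:.3f}")
```

With real data, the linear partial correlation would typically be replaced by a nonparametric test, since the changing mechanisms need not be linear.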

Causal Discovery with Hidden Confounders / Identifying the Hidden Causal World

In many cases, the assumption of no latent confounders, common to many causal discovery algorithms, may not hold. For example, in complex systems it is usually hard to enumerate and measure all task-related variables, so latent variables may influence multiple measured variables; ignoring them can introduce spurious correlations among the measured variables. Moreover, the latent variables may themselves be connected by complex causal relationships.
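
The spurious-correlation effect described above can be seen in a small simulation. The sketch assumes a hypothetical linear-Gaussian system in which an unmeasured variable L drives two measured variables X1 and X2 that have no causal link between them; the coefficients are arbitrary. X1 and X2 are clearly correlated, yet the correlation vanishes once L is regressed out, which is exactly the adjustment that is impossible when L is not measured.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 10_000

# Hypothetical system: a latent variable L drives both X1 and X2;
# there is no causal edge between X1 and X2.
latent = rng.normal(size=n)
x1 = 0.8 * latent + rng.normal(scale=0.6, size=n)
x2 = -0.7 * latent + rng.normal(scale=0.7, size=n)


def residualize(v, on):
    """Residual of v after least-squares regression on `on` (with intercept)."""
    design = np.column_stack([np.ones_like(on), on])
    coef, *_ = np.linalg.lstsq(design, v, rcond=None)
    return v - design @ coef


marginal = np.corrcoef(x1, x2)[0, 1]
adjusted = np.corrcoef(residualize(x1, latent), residualize(x2, latent))[0, 1]
print(f"corr(X1, X2)     = {marginal:.2f}")   # clearly nonzero: spurious
print(f"corr(X1, X2 | L) = {adjusted:.3f}")   # near 0 once L is accounted for
```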

Causal Representation Learning

Causal representation learning aims to uncover latent high-level causal representations from observed low-level data, such as image pixels. One of its primary tasks is to guarantee that these latent causal models can be reliably recovered, a property known as identifiability. A recent breakthrough addresses identifiability by leveraging changes in the causal influences among latent causal variables across multiple environments.

Causality-Facilitated Reinforcement Learning

By incorporating causal insights, RL agents can better understand the underlying cause-and-effect relationships within their environments, leading to more effective exploration, faster learning, and improved generalization across tasks. This strategy also increases the robustness of agents in adapting to distribution shifts and varying conditions, making them more efficient and reliable in complex real-world applications.

Causality-Facilitated Transfer Learning / Domain Generalization

Traditional machine learning models often struggle when applied to new, unseen environments because they tend to learn associations that are specific to the training data and not necessarily robust across varying conditions. This is where causality comes in. By incorporating causal relationships into the learning process, causality-facilitated methods aim to identify and focus on the underlying mechanisms that generate the data rather than merely capturing superficial correlations. This leads to models that are more resilient to changes in the data distribution, making them better suited for transfer learning, where a model trained in one domain is applied to a different but related domain, and for domain generalization, where a model is expected to perform well across multiple unseen domains.
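
A toy linear example, under assumptions chosen purely for illustration, shows why relying on causal features helps under distribution shift. In each hypothetical environment, a feature X_c causes the target Y, while a second feature X_s is an effect of Y whose relation to Y flips sign in the test environment. A least-squares model that uses both features leans heavily on X_s because it is highly predictive during training, so its test error is large; a model restricted to the causal feature keeps roughly the same error in both environments. This is a simplified stand-in for the idea, not a specific transfer-learning or domain-generalization method.

```python
import numpy as np

rng = np.random.default_rng(2)


def make_env(n, spurious_sign):
    """Hypothetical environment: X_c causes Y; X_s is an effect of Y whose
    relation to Y depends on the environment via `spurious_sign`."""
    x_c = rng.normal(size=n)
    y = 2.0 * x_c + rng.normal(scale=1.0, size=n)
    x_s = spurious_sign * y + rng.normal(scale=0.3, size=n)
    return np.column_stack([x_c, x_s]), y


def fit(features, target):
    """Ordinary least squares with an intercept."""
    design = np.column_stack([np.ones(len(target)), features])
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coef


def mse(coef, features, target):
    design = np.column_stack([np.ones(len(target)), features])
    return float(np.mean((design @ coef - target) ** 2))


X_train, y_train = make_env(5000, spurious_sign=+1.0)
X_test, y_test = make_env(5000, spurious_sign=-1.0)   # distribution shift

all_features = fit(X_train, y_train)          # uses X_c and X_s
causal_only = fit(X_train[:, :1], y_train)    # uses X_c only

mse_all = mse(all_features, X_test, y_test)
mse_causal = mse(causal_only, X_test[:, :1], y_test)
print(f"test MSE, all features: {mse_all:.2f}")
print(f"test MSE, causal only:  {mse_causal:.2f}")
```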

Causal Discovery with General Nonlinear Data

In real-world scenarios, causal relationships are often nonlinear. How can we reliably discover causal relations from data generated by general nonlinear mechanisms?
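
One classical way to exploit nonlinearity is the additive noise model (ANM): if Y = f(X) + N with the noise N independent of X, then, apart from special cases such as the linear-Gaussian one, no model of the same form holds in the reverse direction. The sketch below is a swapped-in textbook illustration, not the method developed in this work: it fits a polynomial regression in each direction and scores the dependence between residuals and the putative cause with a biased HSIC estimate. The polynomial degree, the Gaussian kernel with a median-heuristic bandwidth, the sample size, and the simulated cubic mechanism are all assumptions.

```python
import numpy as np

rng = np.random.default_rng(3)


def rbf_gram(v):
    """Gaussian-kernel Gram matrix; bandwidth set by a median heuristic."""
    d2 = (v[:, None] - v[None, :]) ** 2
    return np.exp(-d2 / np.median(d2[d2 > 0]))


def hsic(a, b):
    """Biased empirical HSIC; larger values indicate stronger dependence."""
    n = len(a)
    h = np.eye(n) - np.ones((n, n)) / n   # centering matrix
    k_mat, l_mat = rbf_gram(a), rbf_gram(b)
    return float(np.trace(k_mat @ h @ l_mat @ h)) / (n - 1) ** 2


def anm_score(cause, effect, degree=5):
    """Fit effect = f(cause) + noise with a polynomial f, then measure how
    dependent the residuals are on the putative cause (lower is better)."""
    coefs = np.polyfit(cause, effect, degree)
    residuals = effect - np.polyval(coefs, cause)
    return hsic(cause, residuals)


# Hypothetical data with ground truth X -> Y and non-Gaussian additive noise.
n = 400
x = rng.uniform(-2.0, 2.0, size=n)
y = x ** 3 + rng.uniform(-1.0, 1.0, size=n)

score_xy = anm_score(x, y)   # residuals of Y given X: roughly independent of X
score_yx = anm_score(y, x)   # residuals of X given Y: their spread varies with Y
direction = "X -> Y" if score_xy < score_yx else "Y -> X"
print(f"HSIC forward = {score_xy:.4f}, backward = {score_yx:.4f}; inferred {direction}")
```

In practice one would compare the scores through a permutation or asymptotic independence test rather than a raw comparison, and use a more flexible regressor than a fixed-degree polynomial.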

Causal Discovery in the Presence of Selection Bias

Selection bias is an important issue in statistical inference. Ideally, samples should be drawn randomly from the population of interest. In practice, however, the probability of including a unit in the sample often depends on some of its attributes. If not corrected, such selection bias often distorts the results of causal analysis.
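
A short simulation makes the distortion concrete. It assumes a hypothetical population with two independent attributes (named talent and effort purely for illustration) and a hypothetical selection rule under which a unit is sampled only if the sum of the two attributes exceeds a threshold. In the full population the attributes are uncorrelated, but within the selected sample they are clearly negatively correlated, a spurious dependence created entirely by the selection mechanism (often called Berkson's paradox).

```python
import numpy as np

rng = np.random.default_rng(4)
n = 100_000

# Hypothetical population: two independent attributes.
talent = rng.normal(size=n)
effort = rng.normal(size=n)

# Hypothetical selection rule: a unit enters the sample only if the sum of
# its attributes exceeds a threshold, so inclusion depends on both.
selected = (talent + effort) > 1.0

corr_population = np.corrcoef(talent, effort)[0, 1]
corr_sample = np.corrcoef(talent[selected], effort[selected])[0, 1]
print(f"population corr = {corr_population:.3f}")   # near 0
print(f"selected corr   = {corr_sample:.3f}")       # clearly negative
```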